RAG Chatbot with Groq API¶

- Overview of Retrieval-Augmented Generation (RAG)

- Notebook Content

- Required API Keys

- Creating a Hugging Face Access Token

- Creating a Groq API Key

- Creating an OpenAI API Key

- Store API Keys as Secrets in Google Colab

- Using LLMClient in the Notebook

- Resources for RAG

- License

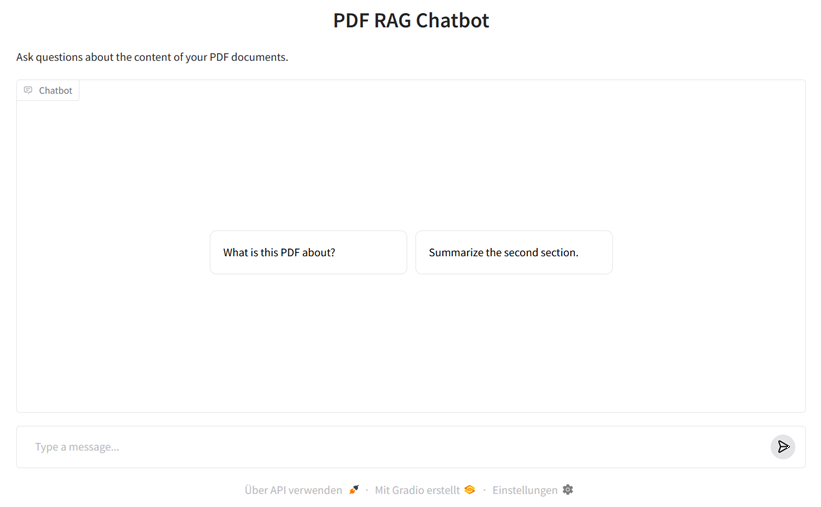

The notebook RAGChatbot_groq_API.ipynb demonstrates how to create a Retrieval-Augmented Generation (RAG) chatbot using the LLMClient class, which can use Groq, OpenAI, or Ollama as its backend.

Overview of Retrieval-Augmented Generation (RAG)¶

Retrieval-Augmented Generation (RAG) combines knowledge from your own documents with the linguistic competence of large AI models like ChatGPT. Instead of the model only accessing its internal (and limited) training knowledge, RAG first searches specifically in a knowledge base or document collection for relevant text passages ("Retrieval") and then passes these along with the user query to the Large Language Model ("Generation").

This allows the system to provide up-to-date, verifiable, and context-related answers – e.g., based on PDF reports, research articles, or internal documentation. Typical use cases include knowledge-based chatbots, intelligent assistance systems, or internal corporate knowledge assistants.
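The retrieve-then-generate idea can be sketched in a few lines. This is a minimal illustration, not the notebook's implementation: the function names `score`, `retrieve`, and `build_prompt` are hypothetical, the sample passages are made up, and a simple word-overlap count stands in for the embedding-based search used in the notebook.

```python
def score(query: str, passage: str) -> int:
    """Toy relevance score: number of distinct query words found in the passage."""
    query_words = set(query.lower().split())
    passage_words = set(passage.lower().split())
    return len(query_words & passage_words)


def retrieve(query: str, passages: list[str], top_k: int = 2) -> list[str]:
    """Retrieval step: return the top_k passages with the highest toy score."""
    ranked = sorted(passages, key=lambda p: score(query, p), reverse=True)
    return ranked[:top_k]


def build_prompt(query: str, context: list[str]) -> str:
    """Generation input: combine retrieved passages and the user query."""
    context_block = "\n".join(f"- {passage}" for passage in context)
    return (
        "Answer the question using only the context below.\n\n"
        f"Context:\n{context_block}\n\n"
        f"Question: {query}"
    )


# Made-up knowledge base for illustration only.
knowledge_base = [
    "The annual report was published in March.",
    "The cafeteria opens at eight in the morning.",
    "Revenue in the annual report grew by ten percent.",
]
question = "What does the annual report say about revenue?"
prompt = build_prompt(question, retrieve(question, knowledge_base))
print(prompt)
```

The resulting prompt is what would be sent to the LLM. Only the two passages about the annual report are included; the unrelated cafeteria passage is filtered out by the retrieval step.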

The following diagram shows the basic structure of a RAG system:

Figure: "High-level overview of the Retrieval Augmented Generation System" by Maanjunath S Naragund, taken from this blog post on Medium. Icons by Flaticon. Used under the right of quotation (§ 51 UrhG). This figure is not under the MIT license of this repository.

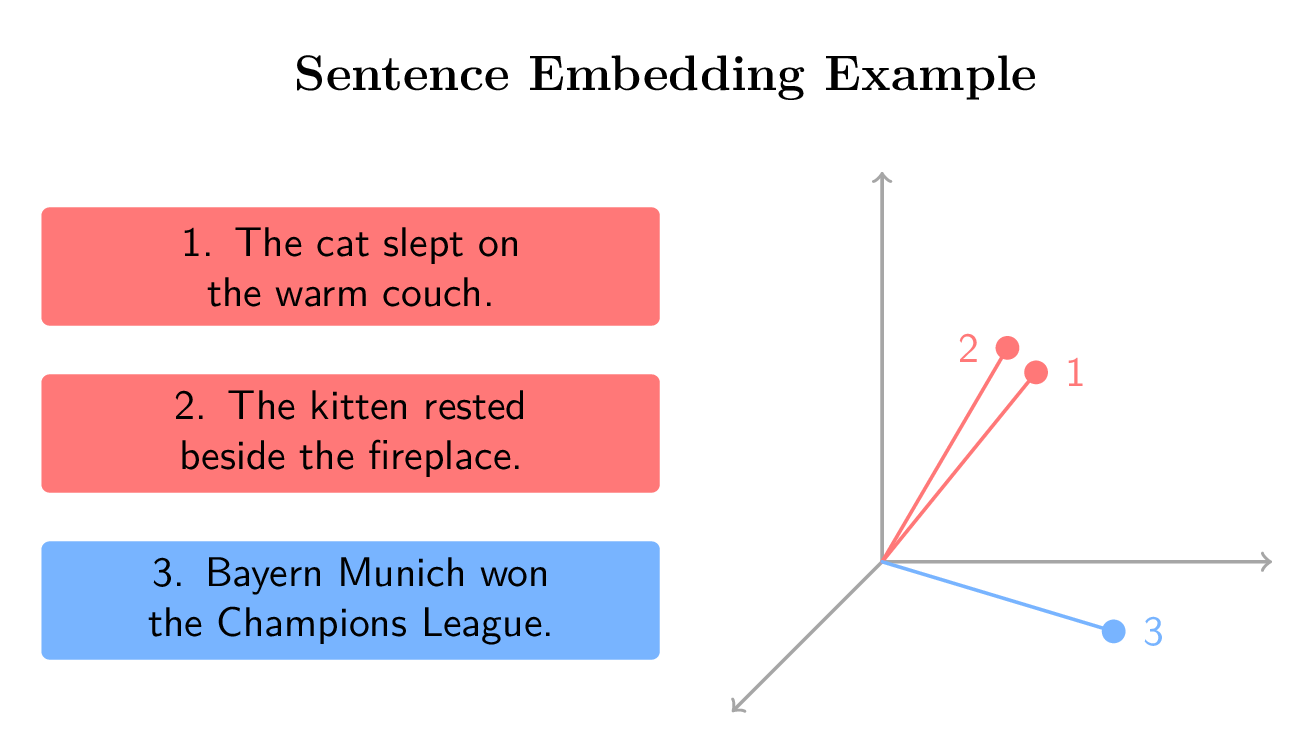

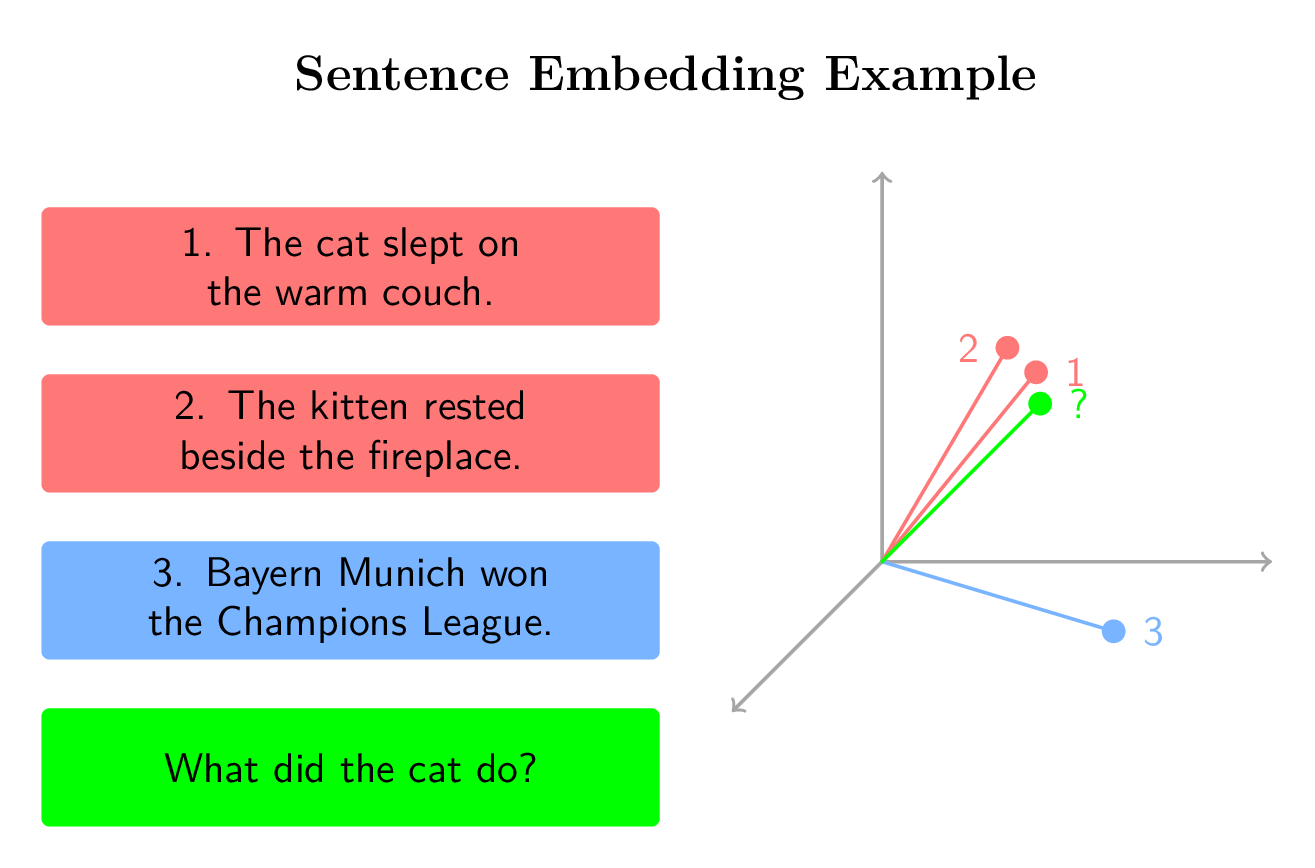

The following two figures illustrate how sentence embeddings represent the semantic meaning of sentences in a shared vector space. Sentences with similar meanings (e.g., paraphrases) map to vectors that lie close together, while sentences with unrelated content lie further apart. Embedding models (a type of language model) convert sentences into these numerical vectors, which makes semantic similarity measurable, for example as the cosine of the angle between two vectors.

The first figure shows three example sentences and their embeddings in a three-dimensional space – two semantically similar sentences (in red) and one thematically independent sentence (in blue).

Figure: Visualization of semantic similarity of sentence embeddings in a three-dimensional vector space. Own representation, inspired by course material from "Retrieval Augmented Generation (RAG)" by DeepLearning.AI on Coursera.

The second figure extends this example with a question vector and demonstrates how semantic similarity can be used to retrieve relevant information in a Retrieval-Augmented Generation system.

Figure: Visualization of semantic similarity of sentence embeddings in a three-dimensional vector space including a question. Own representation, inspired by course material from "Retrieval Augmented Generation (RAG)" by DeepLearning.AI on Coursera.
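The idea behind both figures can be reproduced with a small sketch. The three-dimensional vectors below are hand-picked for illustration (an assumption; real embedding models produce vectors with hundreds of dimensions), and the example sentences are made up. Cosine similarity is used as one common similarity measure.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hand-picked toy embeddings: two paraphrases and one unrelated sentence.
sentence_vectors = {
    "The cat sleeps on the sofa.": [0.9, 0.1, 0.2],
    "A kitten is napping on the couch.": [0.8, 0.2, 0.3],
    "Interest rates rose last quarter.": [0.1, 0.9, 0.1],
}

# Toy embedding of the question "Where is the cat sleeping?"
question_vector = [0.85, 0.15, 0.25]

# Rank the sentences by similarity to the question, most similar first.
ranked = sorted(
    sentence_vectors,
    key=lambda s: cosine_similarity(question_vector, sentence_vectors[s]),
    reverse=True,
)
for sentence in ranked:
    similarity = cosine_similarity(question_vector, sentence_vectors[sentence])
    print(f"{similarity:.3f}  {sentence}")
```

The two cat sentences score close to 1, while the finance sentence scores far lower and ends up last. A RAG system would pass only the top-ranked sentences to the LLM.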

🚀 Notebook Content¶

The notebook demonstrates:

- Installation of required packages in Google Colab

- Using the LLMClient class with:

  - 🧩 Groq API (optional)

  - 🔮 OpenAI API (optional)

  - 💻 Ollama (local, fallback)

- Building a simple RAG workflow:

  - Loading PDF documents with UnstructuredReader from llamaindex

  - Generating embeddings with an embedding model from Hugging Face

  - Combining answers from LLM + ChromaDB vector database

🔑 Required API Keys¶

| Service | Required | Purpose |

|---|---|---|

| Hugging Face Access Token | ✅ required | Download the embedding model for local execution |

| Groq API Key | optional | Use the Groq LLM API |

| OpenAI API Key | optional | Use the OpenAI LLM API |

If neither a Groq nor an OpenAI key is set, LLMClient automatically falls back to Ollama, which only works on a local machine and not in Google Colab.
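The fallback rule can be pictured as follows. This is a hypothetical sketch, not the actual LLMClient code: the function `choose_backend` is invented for illustration, and the precedence of Groq over OpenAI when both keys are set is an assumption that the source does not state.

```python
import os


def choose_backend(env=None) -> str:
    """Pick a backend name from the API keys that are set.

    Hypothetical helper; the Groq-before-OpenAI order is an assumption.
    """
    env = os.environ if env is None else env
    if env.get("GROQ_API_KEY"):
        return "groq"
    if env.get("OPENAI_API_KEY"):
        return "openai"
    # No cloud key found: fall back to a locally running Ollama.
    return "ollama"


print(choose_backend({"OPENAI_API_KEY": "sk-example"}))
print(choose_backend({}))
```

Passing a plain dictionary instead of reading `os.environ` directly keeps the rule easy to try out without touching real keys.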

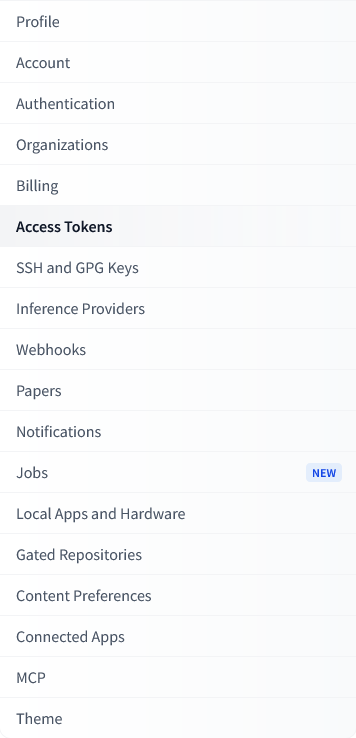

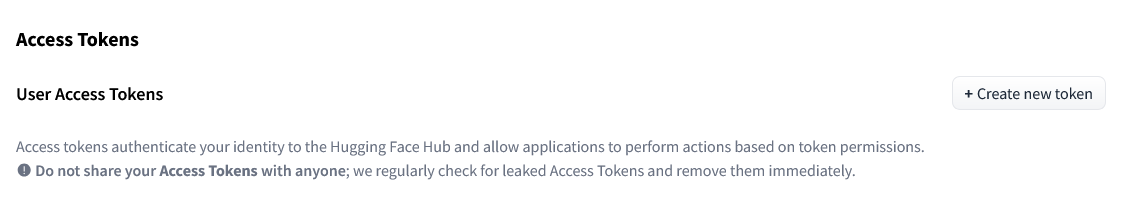

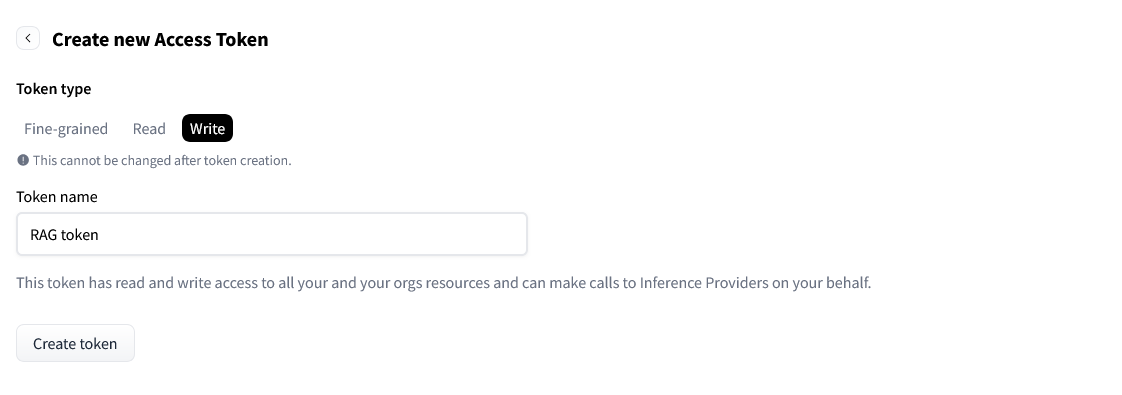

🦮 Creating a Hugging Face Access Token¶

The Hugging Face Access Token is required to access embedding models and other AI models from the Hugging Face Model Hub, which are used to calculate sentence embeddings. These are downloaded from the Model Hub and executed locally.

- Create a free account at https://huggingface.co/ or log in (if necessary).

- Click on the "Create new token" button

- Enter a name (e.g., colab-rag) and select Type: Write

- Copy the displayed token (usually starts with hf_...).

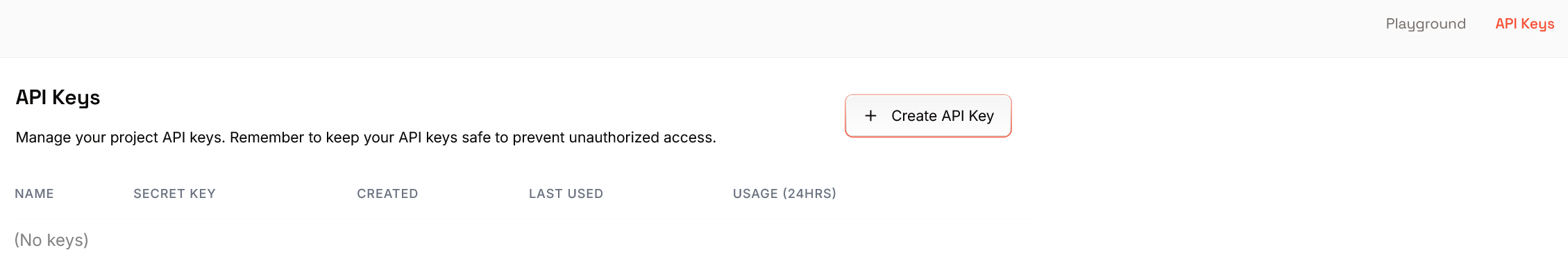

⚡️ Creating a Groq API Key¶

The Groq API Key allows access to publicly available LLMs that can be used for particularly fast text generation and question answering in the RAG workflow. These LLMs are executed in the GroqCloud.

- Create a free account at https://groq.com/ or log in (if necessary).

- Visit https://console.groq.com/keys

- Click on "Create API Key"

- Copy the key (usually starts with gsk_...).

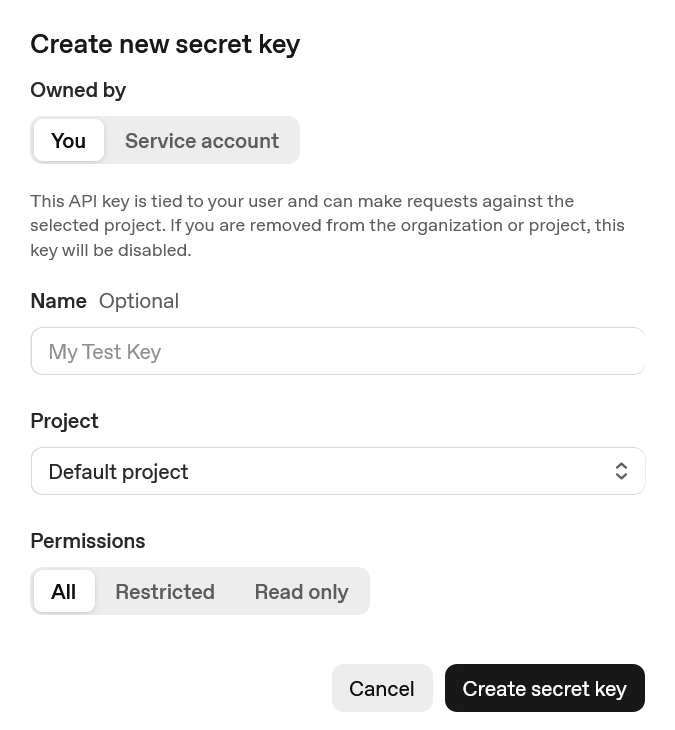

🔮 Creating an OpenAI API Key¶

The OpenAI API Key allows the use of OpenAI models (e.g., GPT-4 or GPT-4o) to generate context-related answers in the Retrieval-Augmented Generation system.

- Click on "Create new secret key"

- Copy the key (usually starts with sk-...).

Creating a Google Gemini API Key¶

- Visit Google AI Studio

- Click on "Get API Key" or "Create API Key"

- Select a Google Cloud project or create a new one

- Copy the generated API key (starts with AIzaSy...)

Note: The Gemini API is accessed via the OpenAI compatibility mode, therefore only the openai Python package is required.
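A sketch of what the OpenAI compatibility mode looks like with the openai package. The base URL is the OpenAI-compatible endpoint that Google documents at the time of writing; the helper names `gemini_client_kwargs` and `ask_gemini` and the default model name are assumptions for illustration and are not taken from LLMClient.

```python
# OpenAI-compatible Gemini endpoint (check Google's documentation for changes).
GEMINI_BASE_URL = "https://generativelanguage.googleapis.com/v1beta/openai/"


def gemini_client_kwargs(api_key: str) -> dict:
    """Arguments for openai.OpenAI(...) that point the client at Gemini."""
    return {"api_key": api_key, "base_url": GEMINI_BASE_URL}


def ask_gemini(prompt: str, api_key: str, model: str = "gemini-2.0-flash") -> str:
    """Send one user message to Gemini; the model name is an assumed example."""
    from openai import OpenAI  # imported lazily; requires `pip install openai`

    client = OpenAI(**gemini_client_kwargs(api_key))
    response = client.chat.completions.create(
        model=model,
        messages=[{"role": "user", "content": prompt}],
    )
    return response.choices[0].message.content
```

Calling `ask_gemini("Hello", api_key="AIzaSy...")` sends a real request, so it needs a valid key and network access.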

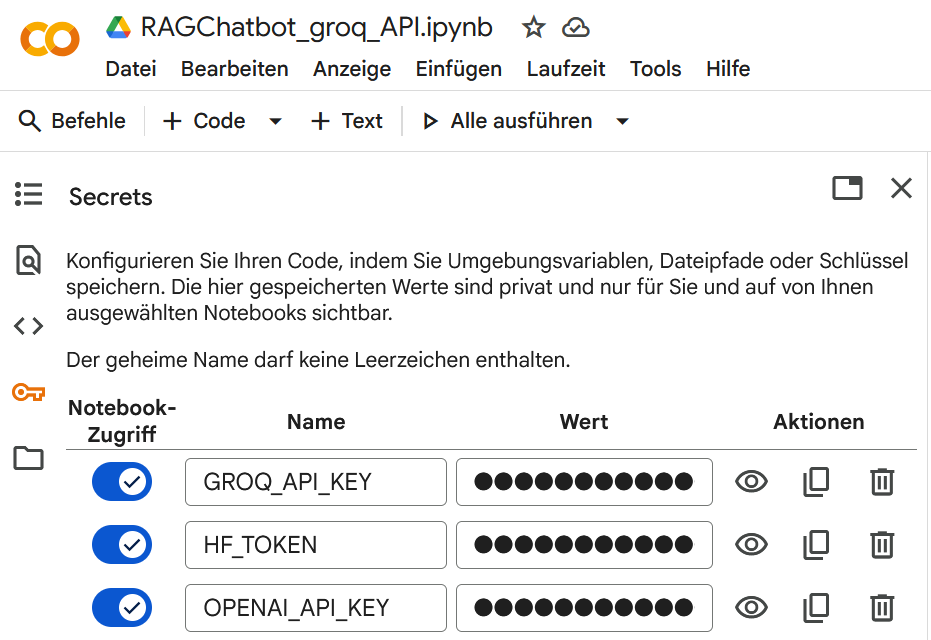

☁️ Store API Keys as Secrets in Google Colab¶

- Click on the key symbol 🔑 in the menu on the left

- Create the following secrets:

| Name | Value |

|---|---|

| HF_TOKEN | your Hugging Face Access Token |

| GROQ_API_KEY | (optional) your Groq API Key |

| OPENAI_API_KEY | (optional) your OpenAI API Key |
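Stored secrets can then be read from notebook code. The following is a minimal sketch, assuming the secret names from the table above: in Colab it uses `google.colab.userdata`, and outside Colab it falls back to environment variables. The helper `get_secret` is a hypothetical name and not part of LLMClient.

```python
import os


def get_secret(name: str):
    """Return a secret from Colab if available, else from the environment."""
    try:
        from google.colab import userdata  # only importable inside Colab
    except ImportError:
        return os.environ.get(name)
    try:
        return userdata.get(name)
    except Exception:
        # Secret is missing or notebook access was not granted.
        return None


hf_token = get_secret("HF_TOKEN")
groq_key = get_secret("GROQ_API_KEY")      # optional
openai_key = get_secret("OPENAI_API_KEY")  # optional
print("HF token set:", hf_token is not None)
```

In Colab, each secret also needs "Notebook access" switched on in the secrets panel; otherwise the lookup fails and the helper returns None.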

⚙️ Using LLMClient in the Notebook¶

```python
from llm_client import LLMClient

# LLMClient automatically detects which keys are set
client = LLMClient()

print("Used API:", client.api_choice)
print("Model:", client.llm)
```

If no Groq or OpenAI key is found, the client automatically falls back to Ollama (local operation).

Resources for RAG¶

Coursera Course on Retrieval Augmented Generation (RAG) by DeepLearning.AI

🧩 License¶

This notebook is part of the repository dgaida/llm_client. © 2025 – Daniel Gaida, Technical University. Licensed under the MIT License.